Does Quality Control Really Make Your Code Better?

The recent blog post ‘Improving Software Quality’ by my colleague Martin showed how we can improve software quality besides just installing tools: We believe that a continuous quality control process is necessary to have a long-term, measurable impact.

However, does this quality control actually have a long-term impact in practice? Or do feature and change requests still get higher priority than cleaning up code that was written in a hurry and under time pressure?

With our long-term partner Munich Re, we gathered enough data to show in a large-scale industry case study that quality control can improve code quality even while the system is still under active development. Our results were accepted for publication as a conference paper at the International Conference on Software Maintenance and Evolution (ICSME).

So what have we learned so far? We know that just installing tools is not enough. Quality will not actually improve. We also know that it is much easier to fix bugs in code you were recently working on rather than in code that you have not touched in ages. But how can we use these insights to integrate quality control in our daily development routine? And who knows if code quality will actually improve then?

Integrating Code Quality in Your Daily Development

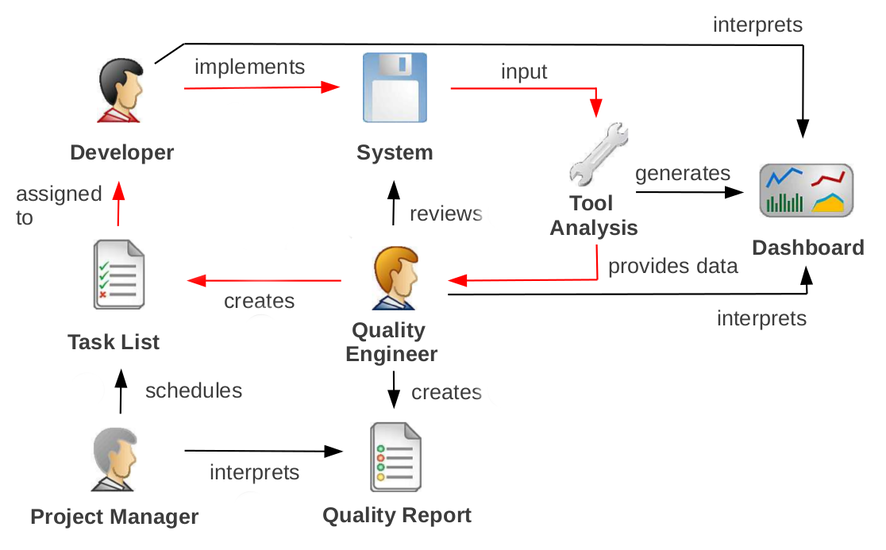

This is how we believe you can make it work. It is simple, but not easy, and requires close cooperation between developers, quality engineers, and management. The picture above illustrates what we do for continuous quality control:

At regular intervals, the quality engineer reviews the system and the results of the automatic analyses. Based on his review, he creates refactoring tasks for the developers and writes a quality report for management. The report is presented to and discussed with both developers and managers. The project manager and the quality engineer then go through the task list together, and the manager decides whether to accept or reject each task. Accepted tasks are scheduled within the development process in the same way as change requests. The tasks follow the quality goal of the project, which may vary in strength depending on the criticality of the project. For one project, for example, you may want all modified code to be cleaned up as part of the modification, whereas for another it is enough to make sure that no new findings are introduced.
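To make the two example quality goals concrete, here is a minimal Python sketch. It is an illustration only, not the tooling used in the case study. The names `QualityGoal`, `Finding`, and `violations` are hypothetical, and identifying a finding by its file and message is a simplifying assumption (real analysis tools track findings across edits in more robust ways).

```python
from dataclasses import dataclass
from enum import Enum


class QualityGoal(Enum):
    # Weaker goal: existing findings are tolerated, new ones are not.
    NO_NEW_FINDINGS = "no new findings"
    # Stronger goal: every file that is touched must end up free of findings.
    CLEAN_MODIFIED_CODE = "clean modified code"


@dataclass(frozen=True, order=True)
class Finding:
    file: str
    message: str


def violations(goal, baseline, current, modified_files):
    """Return the findings in `current` that violate `goal`, sorted.

    baseline       -- set of findings at the previous report
    current        -- set of findings now
    modified_files -- set of file names changed since the baseline
    """
    if goal is QualityGoal.NO_NEW_FINDINGS:
        # Only findings that were absent from the baseline count.
        return sorted(current - baseline)
    # Stronger goal: any finding left in a modified file counts, old or new.
    return sorted(f for f in current if f.file in modified_files)


baseline = {Finding("a.cs", "long method"), Finding("b.cs", "clone")}
current = baseline | {Finding("a.cs", "deep nesting")}
modified = {"a.cs"}

new_only = violations(QualityGoal.NO_NEW_FINDINGS, baseline, current, modified)
strict = violations(QualityGoal.CLEAN_MODIFIED_CODE, baseline, current, modified)
```

In this toy example, the weaker goal flags only the newly introduced finding in `a.cs`, while the stronger goal also flags the old finding in the same file because the file was touched. The untouched `b.cs` is left alone under both goals.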

This process has several implications:

It is transparent: developers and managers agree on a common quality goal, know exactly which measures are used for evaluation, and have access to a common quality dashboard.

It is binding: as the project manager schedules the refactoring tasks, he also has to provide the required resources. Developers actually get allocated time to make the code better rather than doing it on the fly.

It is actionable: the quality engineer writes refactoring tasks at implementation level. To make the entire process a success, the quality engineer must have sufficient development skills himself and must have a deep understanding of the system and its architecture.

When introducing this process to your project, it is important that the quality engineer, who can be either internal or external (such as a CQSE consultant), is not perceived as a quality policeman. He should rather be perceived as a supportive sparring partner for all quality aspects, who makes sure that developers get time to refactor their code and do not just have to process change request after change request as fast as possible.

Quality Control at Munich Re

At Munich Re, we established this process more than five years ago. By now, 41 projects are under quality control: 32 .NET and 9 SAP software systems, mainly developed by professional software service providers on and off the premises of Munich Re. These applications comprise about 11,000 kLoC (8,800 kLoC manually written and 2,200 kLoC generated) and are developed by roughly 150 to 200 developers distributed over multiple countries. In all 41 projects, we fulfill the tasks of a quality engineer and operate on site. Due to regular interaction with the quality engineer, developers perceive him as part of the development process.

Case Study

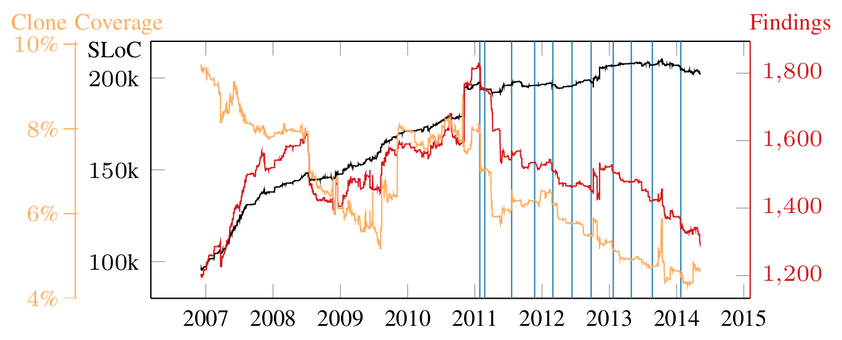

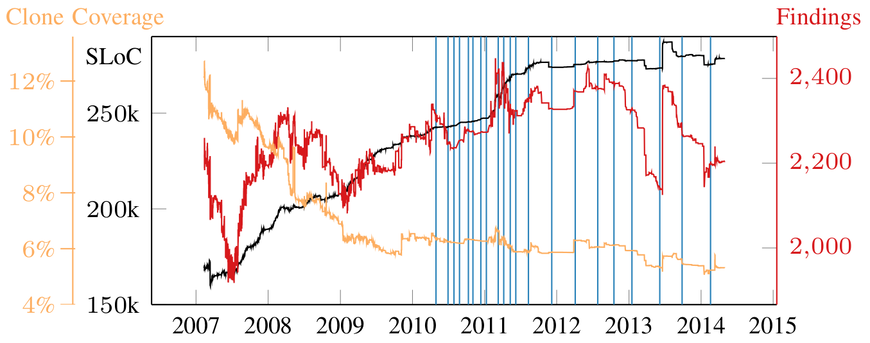

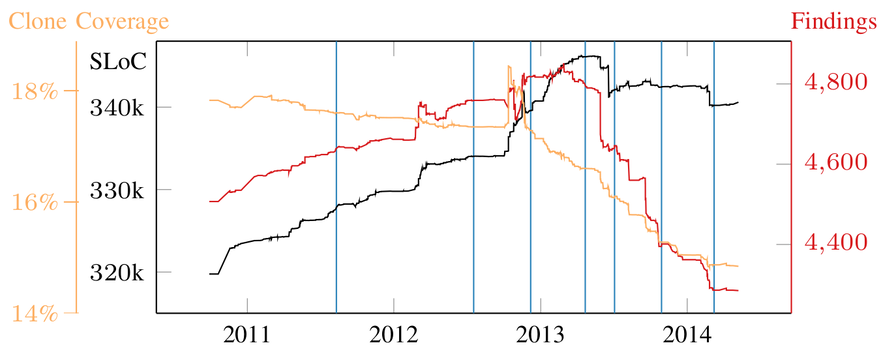

In this blog post, we provide data from three systems, showing the quality evolution before and after the introduction of quality control. Additional data can be found in the paper. The figures above show the evolution of the system size in black, the trend of the number of findings (i.e., quality defects found) in red, and the clone coverage in orange. The quality-controlled period is indicated by a vertical line for each report, i.e., quality control starts at the first report date.
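For readers unfamiliar with the metric: clone coverage is commonly defined as the fraction of code lines that are covered by at least one clone. This post does not spell out the exact definition used in the study, so treat that as an assumption. The following Python sketch shows the computation under that definition; the function name and the `(file, first_line, last_line)` input format are hypothetical.

```python
def clone_coverage(lines_per_file, clone_regions):
    """Fraction of all lines that lie inside at least one clone region.

    lines_per_file -- dict mapping file name to its number of lines
    clone_regions  -- iterable of (file, first_line, last_line), inclusive
    """
    covered = set()
    for file, first_line, last_line in clone_regions:
        # A set ensures that lines in overlapping regions count only once.
        covered.update((file, line) for line in range(first_line, last_line + 1))
    total = sum(lines_per_file.values())
    return len(covered) / total if total else 0.0


coverage = clone_coverage(
    {"a.cs": 100, "b.cs": 100},
    [("a.cs", 1, 10), ("a.cs", 6, 15), ("b.cs", 20, 24)],
)
```

Here the two regions in `a.cs` overlap and together cover lines 1 to 15, and the region in `b.cs` covers 5 lines, so 20 of 200 lines are covered, which gives a clone coverage of 10%.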

On the first system, our quality control has a great impact: Prior to quality control, both the system size and the number of findings grow. During quality control, the system keeps growing, but the number of findings declines and the clone coverage reaches a global minimum of 5%. This shows that the quality of a system can be measurably improved even while the system keeps growing.

For the next system, quality control began in 2010. However, this system had already been part of a scientific cooperation with the Technische Universitaet Muenchen since 2006, in which a dashboard for monitoring the clone coverage had been introduced. Consequently, the clone coverage decreases continuously over the available history. The number of findings, however, increases until mid-2012. In 2012, the project switched to a stronger quality goal. After this change, the number of findings decreases and the clone coverage settles around 6%, which is a success of the quality control. The major increase in the number of findings in 2013 is only due to an automated code refactoring that introduced braces and thereby led to threshold violations in a few hundred methods. After this increase, the number of findings decreases again, reflecting the manual effort of the developers to remove findings.

For the third system, the quality control process shows a significant impact after two years: Since the end of 2012, when the project also switched to a stronger quality goal, both the clone coverage and the overall number of findings decline. In the year before, the project transitioned between development teams and, hence, we only wrote two reports (July 2011 and July 2012).

Conclusions

So, does our quality control really make your code better? Yes, it does. And it is measurable: our trends from Munich Re show that the process leads to actual quality improvement. Munich Re decided only recently to extend our quality control from the .NET area to all SAP development. As Munich Re develops mainly in the .NET and SAP area, most application development is now supported by quality control. The decision to extend the scope of quality control confirms that Munich Re is convinced of its benefit. Since the process was established, maintainability issues like code cloning have become an integral part of discussions among developers and management.
