-

Blog

Blog

-

Change-based vs. File-based Code Reviews

Change-based vs. File-based Code Reviews

Many consider manual code reviews to be the premium solution to quality control, as they can locate and eliminate all kinds of issues ranging from functional bugs over security deficits to maintainability problems and have many other benefits as a side-effect. When installing code reviews in your development project, there are a couple of flavours to choose from. This post highlights one of these variation points, namely the granularity of the artifact being reviewed.

What do we review, after all?

When talking about peer reviews that cover every line of code written during development, there seems to be consent that reviews are aligned with change sets. This means that the review is for a set of coherent changes related to the same feature or bug fix. Depending on the tool infrastructure and development process, this could be a single commit to the version control system, a set of commits, or a ticket in the issue tracker, which again is linked to one or more commits. Thus, the scope of the review seems to be well defined, but there is a seemingly small detail that has consequences on the review process and tools used: Does the reviewer review the changes (aka the diff) or the changed files?

Change-based reviews

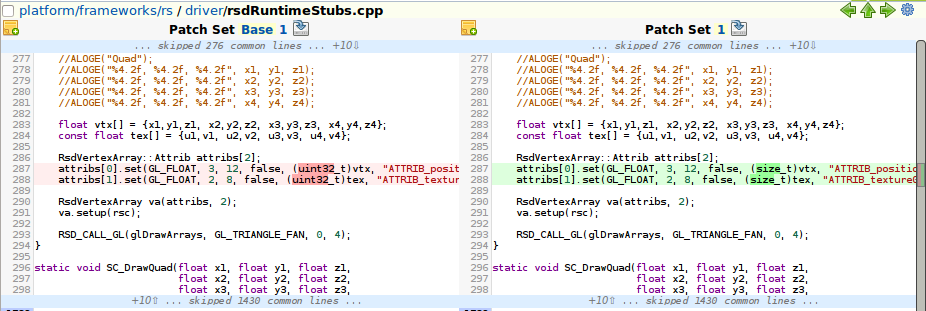

Many of the currently available review tools, such as rietveld or gerrit, are descendants of Google’s Mondrian and inherit its change-based nature. That means that the reviewer focuses on the changes and, consequently, these tools provide a diff view for the changes performed within the commit(s) under review. The information whether a change set has been successfully reviewed is typically stored within the review tool, as many version control systems do not allow to attach this kind of meta-information to a commit.

If you require all code to be reviewed before a release, you have to ensure that all change sets in your release branch are marked as reviewed. Alternatively, many review tools allow pre-commit reviews. Then commits are merged into the release branch only after a successful review, leading to a guaranteed fully reviewed release code base.

File-based reviews

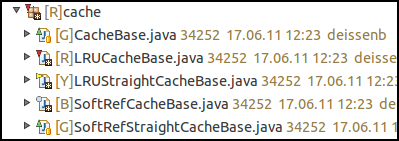

When we introduced systematic peer reviews in our team during the initial development of ConQAT in 2005, the abundance of review tools found these days was not yet available. Hence, we started with the solution we could implement with minimal tool requirements. This was to mark each file as either RED (meaning, in development or failed review), YELLOW (ready for review), or GREEN (successfully reviewed). The information was stored in a special comment that also included the commit id of the commit we changed the color in, allowing to see if there had been non-reviewed changes after the last review. The responsibility of the reviewer is the entire file and the code base is release ready, when all files are marked GREEN (which could be visualized using ConQAT, btw).

While our tools improved (we now use a content MD5 to protect against accidental changes instead of the commit id) and matured (including a custom Eclipse plug-in to visualize and update these file colors), we essentially still use the same process from 2005 for the development of Teamscale.

Is there really a difference?

Yes and no. With change-based reviews, no-one stops you from also looking at the entire file and the tools typically also provide a link to do exactly that. With file-based reviews, of course the reviewer also looks at the diff of each file to better understand the changes made. Still there are a couple of significant differences.

Required tooling

Performing change-based reviews is nearly impossible without additional tools that keep track of commits and reviews of them. With most tools, this means a centralized server that is connected to the version control system. Without a network connection (ever wanted to do your reviews on the plane?) you are usually out of luck. But to be honest, with today’s Internet coverage, this might be a minor problem. Still, you have to find a tool that supports your version control system (or choose the VCS depending on the review tool).

File-based reviews, in contrast, have virtually no requirements in terms of tool support. You can keep both the review status of the file and the review comments within the files. Historization of this information is provided for free by the VCS. Tooling for visualization of review status and updating the status of multiple files can be implemented with minimal effort (our basic review tooling consists of a little more than thousand lines of code). The version control system can be chosen without any constraints. If you want, you can use file-based reviews with patches and tar balls.

Fully reviewed release code base

Checking whether your code base has been completely reviewed and hence is ready for a release is easy in the file-based case. Just check that every file’s status is reviewed, which is typically supported by a tool. With change-based reviews, this is not more complicated, as the review server should provide this information to you.

Where change-based reviews really shine is if you require the current release branch to always contain reviewed code only. Most tools allow to implement so called pre-commit reviews, which allow a commit or merge to the release branch only after a completed review. This keeps the release branch always completely reviewed. The same can be implemented with file-based reviews when using feature branches and merging them only after the feature branch has been completely reviewed. However, it requires more process adherence and does not come as natural as with change-based reviews.

Reviewer responsibility

The reason that kept us using file-based reviews is the question of what the reviewer is responsible for. Is it just to check that a change set is OK, or should the reviewer ensure that the code base (or at least the file) is still OK after the changes are applied? Again, this difference is subtle, but in our experience many changes that are very good individually can in combination lead to maintainability issues, such as unused fields, over time. Using the file-based approach forces a certain mindset to our reviewers. This can lead to improvements in a file that are not directly related to the change under review but might improve the file after many changes have accumulated over time. We are convinced that without this global view, many more quality deficits would creep into our code base over time.

And the winner is …

In this post we highlighted some of the differences between two possible approaches to scope your peer reviews. While the differences can have non-trivial consequences, none of the approaches is better in every situation. If your development infrastructure (especially your VCS) is not supported by existing review tools, you should consider using file-based reviews unless you are willing to write your own tools. Other than that, it boils down to preferences of your team. While we prefer the simpler tooling and the full-file responsibility for our own development, the optional pre-commit reviews of change-based review tools are definitely a useful feature. In the end, it is more important that your team does peer reviews than which kind of review flavour it uses. So get started with introducing reviews in your team right now.

Related Posts

Our latest related blog posts.