Quality Control and Software Audits at LV 1871

Control an application portfolio

Company

The Lebensversicherung von 1871 a. G. München insurance company specialises in innovative permanent disability insurance, life insurance and pension insurance.

LV 1871 develops its central applications in-house using agile development methods. These include several Java systems, one COBOL system and a variety of proprietary extensions to archive and print systems.

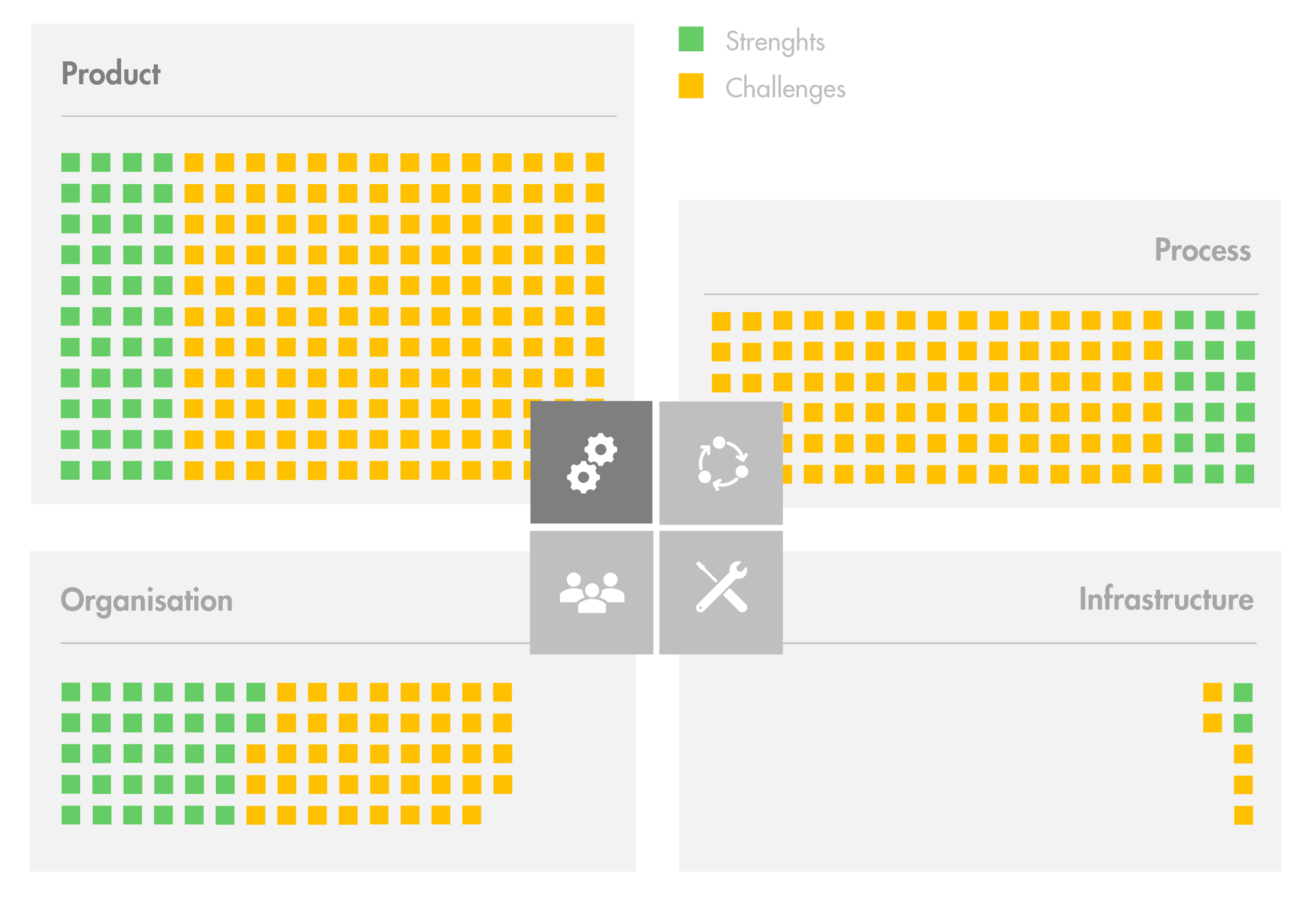

In light of this diverse application landscape, which is common for insurance companies, LV 1871 is interested in a comprehensive overview of the quality of its application portfolio.

Mission

We analyse LV 1871’s central systems using established automated static analyses and manual code reviews.

We assess the code quality of each system with respect to several aspects, such as structure, redundancy, documentation and common error patterns. In doing so, we examine not only the current state of the systems but also their quality trends over previous years, based on a repository analysis.

We summarise our results in detailed written reports and discuss them with the development teams. On this basis, and in cooperation with LV 1871, we identify useful improvement measures and help the teams to apply them.

Benefit

LV 1871 knows the strengths and weaknesses of each inspected system in detail. The company is aware of where the systems stand in terms of code quality and how they have evolved to reach that state. As a result, it will not experience any unpleasant surprises.

Based on this knowledge, LV 1871 can derive targeted improvement measures and distribute resources and skills across all systems as needed.

In addition, the thorough examination raises awareness of code quality among the project participants and thus contributes to sustainable improvement.

What we did at LV 1871

The code analysis considers well-accepted quality criteria. It is based on automated analysis using Teamscale, which supports analysis of nearly all programming languages. Our experienced auditors validate the results.

The auditors also contribute manual analyses of criteria that tools cannot cover, e.g., the quality of comments in the code, and complement the automated analyses with an in-depth review of selected source code files.

Together with the development team, we identify and discuss the components of the system and dependencies between them. We also cover deployment aspects and interaction with other systems, as well as properties like scalability, security and performance.

Together with you, we select relevant changes that are likely to be made to your system. We discuss with the development team the impact of these changes and how well the architecture supports them.

Software systems typically rely on a large technology stack including programming languages, databases, frameworks and libraries. We analyse the relevant technologies for associated risks, based on standardised criteria.

The development process itself contributes significantly to how future-proof a system is. Following the phases of a typical process, we discuss the tools and methods the development team uses to implement changes. The assessment considers industry standards and established best practices.

Publications together with LV 1871

More than 120 software systems have already benefited from one of our audits